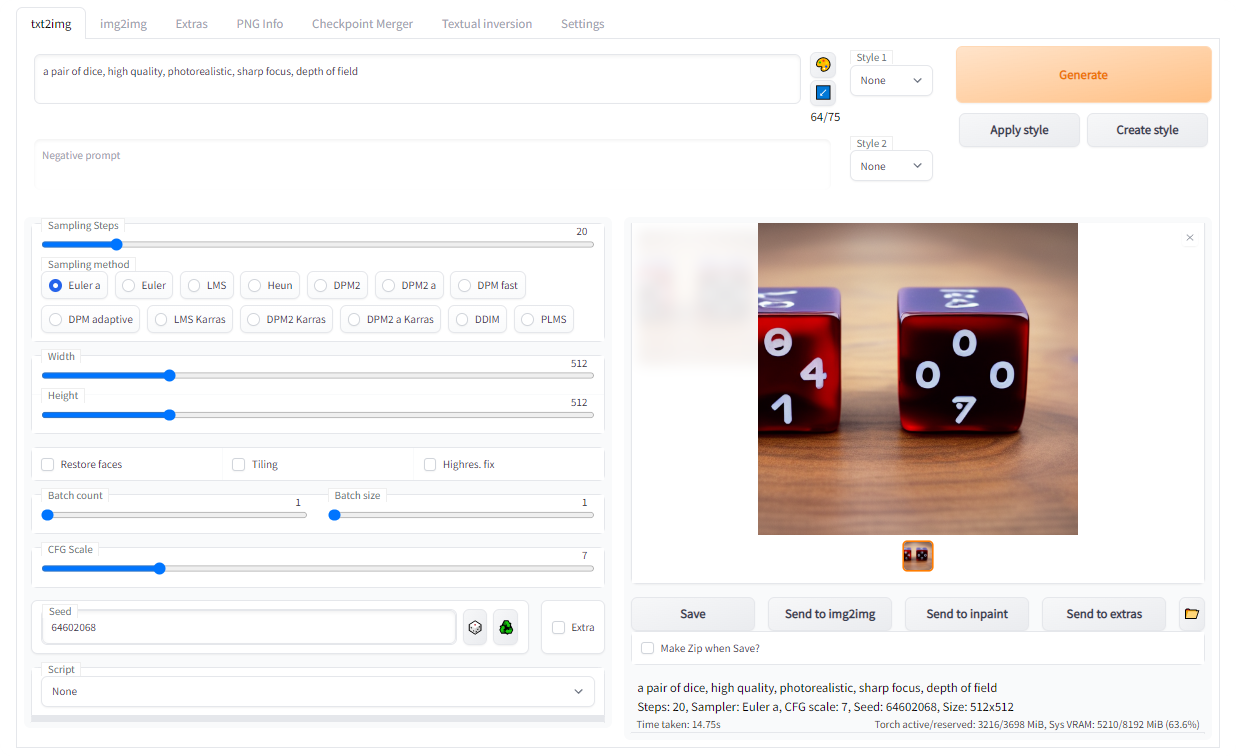

Stable Diffusion web UI

A browser interface for Stable Diffusion, built on the Gradio library.

Check the custom scripts wiki page for extra scripts developed by users.

Features

Detailed feature showcase with images:

- Original txt2img and img2img modes

- One click install and run script (but you still must install python and git)

- Outpainting

- Inpainting

- Color Sketch

- Prompt Matrix

- Stable Diffusion Upscale

- Attention, specify parts of text that the model should pay more attention to

  - a man in a ((tuxedo)) - will pay more attention to tuxedo

  - a man in a (tuxedo:1.21) - alternative syntax

  - select text and press ctrl+up or ctrl+down to automatically adjust attention to selected text (code contributed by anonymous user)

- Loopback, run img2img processing multiple times

- X/Y plot, a way to draw a 2 dimensional plot of images with different parameters

- Textual Inversion

  - have as many embeddings as you want and use any names you like for them

  - use multiple embeddings with different numbers of vectors per token

  - works with half precision floating point numbers

  - train embeddings on 8GB (also reports of 6GB working)

- Extras tab with:

  - GFPGAN, neural network that fixes faces

  - CodeFormer, face restoration tool as an alternative to GFPGAN

  - RealESRGAN, neural network upscaler

  - ESRGAN, neural network upscaler with a lot of third party models

  - SwinIR and Swin2SR (see here), neural network upscalers

  - LDSR, Latent diffusion super resolution upscaling

- Resizing aspect ratio options

- Sampling method selection

- Adjust sampler eta values (noise multiplier)

- More advanced noise setting options

- Interrupt processing at any time

- 4GB video card support (also reports of 2GB working)

- Correct seeds for batches

- Live prompt token length validation

- Generation parameters

  - parameters you used to generate images are saved with that image

  - in PNG chunks for PNG, in EXIF for JPEG

  - can drag the image to PNG info tab to restore generation parameters and automatically copy them into UI

  - can be disabled in settings

  - drag and drop an image/text-parameters to promptbox

- Read Generation Parameters Button, loads parameters in promptbox to UI

- Settings page

- Running arbitrary python code from UI (must run with --allow-code to enable)

- Mouseover hints for most UI elements

- Possible to change default/min/max/step values for UI elements via text config

- Random artist button

- Tiling support, a checkbox to create images that can be tiled like textures

- Progress bar and live image generation preview

- Negative prompt, an extra text field that allows you to list what you don't want to see in generated image

- Styles, a way to save part of prompt and easily apply them via dropdown later

- Variations, a way to generate same image but with tiny differences

- Seed resizing, a way to generate same image but at slightly different resolution

- CLIP interrogator, a button that tries to guess prompt from an image

- Prompt Editing, a way to change prompt mid-generation, say to start making a watermelon and switch to anime girl midway

- Batch Processing, process a group of files using img2img

- Img2img Alternative, reverse Euler method of cross attention control

- Highres Fix, a convenience option to produce high resolution pictures in one click without usual distortions

- Reloading checkpoints on the fly

- Checkpoint Merger, a tab that allows you to merge up to 3 checkpoints into one

- Custom scripts with many extensions from community

- Composable-Diffusion, a way to use multiple prompts at once

  - separate prompts using uppercase AND

  - also supports weights for prompts: a cat :1.2 AND a dog AND a penguin :2.2

- No token limit for prompts (original stable diffusion lets you use up to 75 tokens)

- DeepDanbooru integration, creates danbooru style tags for anime prompts (add --deepdanbooru to commandline args)

- xformers, major speed increase for select cards (add --xformers to commandline args)

- via extension: History tab: view, direct and delete images conveniently within the UI

- Generate forever option

- Training tab

  - hypernetworks and embeddings options

  - Preprocessing images: cropping, mirroring, autotagging using BLIP or deepdanbooru (for anime)

- Clip skip

- Use Hypernetworks

- Use VAEs

- Estimated completion time in progress bar

- API

- Support for dedicated inpainting model by RunwayML.

- via extension: Aesthetic Gradients, a way to generate images with a specific aesthetic by using CLIP image embeddings (implementation of https://github.com/vicgalle/stable-diffusion-aesthetic-gradients)
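The attention syntax in the feature list above can be illustrated with a small parser. This is only a minimal sketch, not the web UI's own code: the function name `parse_attention` is hypothetical, and the multiplier of 1.1 per pair of round brackets is an assumption chosen because it makes `((tuxedo))` and `(tuxedo:1.21)` agree. Unclosed brackets are simply ignored here.

```python
import re

# Assumed multiplier for each pair of round brackets; 1.1 * 1.1 = 1.21,
# which matches the two tuxedo examples in the feature list.
PAREN_MULTIPLIER = 1.1

# Tokens: "(", an explicit ":<number>)" close, a plain ")", text, or a lone ":".
_TOKEN = re.compile(r"\(|:\s*(\d+(?:\.\d+)?)\s*\)|\)|[^():]+|:")


def parse_attention(prompt):
    """Split a prompt into (text, weight) pairs (illustrative sketch only)."""
    chunks = []   # [text, weight] pairs in prompt order
    open_at = []  # index into chunks where each unclosed "(" started

    for match in _TOKEN.finditer(prompt):
        token = match.group(0)
        explicit = match.group(1)  # the number in ":<number>)", if present
        if token == "(":
            open_at.append(len(chunks))
        elif explicit is not None and open_at:
            # "(text:1.21)" scales everything since the matching "(".
            for chunk in chunks[open_at.pop():]:
                chunk[1] *= float(explicit)
        elif token == ")" and open_at:
            # "(text)" scales everything since the matching "(" by 1.1.
            for chunk in chunks[open_at.pop():]:
                chunk[1] *= PAREN_MULTIPLIER
        else:
            # Plain text, or a stray ")" / ":" with nothing to close.
            chunks.append([token, 1.0])

    # Merge neighbours that ended up with the same weight.
    merged = []
    for text, weight in chunks:
        weight = round(weight, 4)
        if merged and merged[-1][1] == weight:
            merged[-1] = (merged[-1][0] + text, weight)
        else:
            merged.append((text, weight))
    return merged


if __name__ == "__main__":
    print(parse_attention("a man in a ((tuxedo))"))
    print(parse_attention("a man in a (tuxedo:1.21)"))
```

Under these assumptions both example prompts give "tuxedo" a weight of 1.21 and leave the rest of the prompt at 1.0.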

Where are Aesthetic Gradients?!?!

Aesthetic Gradients are now an extension. You can install it using git:

git clone https://github.com/AUTOMATIC1111/stable-diffusion-webui-aesthetic-gradients extensions/aesthetic-gradients

After running this command, make sure that the aesthetic-gradients directory exists in webui's extensions directory, then restart the UI. The interface for Aesthetic Gradients should appear exactly the same as it was.

Where is History/Image browser?!?!

Image browser is now an extension. You can install it using git:

git clone https://github.com/yfszzx/stable-diffusion-webui-images-browser extensions/images-browser

After running this command, make sure that the images-browser directory exists in webui's extensions directory, then restart the UI. The interface for Image browser should appear exactly the same as it was.
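The "make sure the directory exists" step for both extensions can be checked with a few lines of Python. This is a sketch under stated assumptions: the helper name `missing_extensions` is hypothetical, and the directory names are taken from the target paths in the two git clone commands above.

```python
from pathlib import Path

# Directory names taken from the two git clone commands above.
EXPECTED_EXTENSIONS = ("aesthetic-gradients", "images-browser")


def missing_extensions(webui_root, names=EXPECTED_EXTENSIONS):
    """Return the names that have no directory under <webui_root>/extensions."""
    extensions_dir = Path(webui_root) / "extensions"
    return [name for name in names if not (extensions_dir / name).is_dir()]


if __name__ == "__main__":
    # Assumes the script is run from the webui checkout; adjust the path otherwise.
    missing = missing_extensions(".")
    if missing:
        print("Not installed:", ", ".join(missing))
    else:
        print("Both extensions are in place; restart the UI.")
```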

Installation and Running

Make sure the required dependencies are met and follow the instructions available for both NVIDIA (recommended) and AMD GPUs.

Alternatively, use online services (like Google Colab).

Automatic Installation on Windows

- Install Python 3.10.6, checking "Add Python to PATH"

- Install git.

- Download the stable-diffusion-webui repository, for example by running git clone https://github.com/AUTOMATIC1111/stable-diffusion-webui.git.

- Place model.ckpt in the models directory (see dependencies for where to get it).

- (Optional) Place GFPGANv1.4.pth in the base directory, alongside webui.py (see dependencies for where to get it).

- Run webui-user.bat from Windows Explorer as normal, non-administrator, user.
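Before running webui-user.bat, the steps above can be double-checked with a small preflight script. This is a hedged sketch, not part of the repository: the function name `check_install` is hypothetical, it only compares the Python major and minor version against 3.10 (an assumption based on the 3.10.6 recommendation), and it looks for model.ckpt exactly where the steps above say to place it.

```python
import shutil
import sys
from pathlib import Path


def check_install(webui_root, python_version=None, git_found=None):
    """Return a list of problems; an empty list means every check passed.

    python_version and git_found default to what is detected on this
    machine; they can be passed in explicitly, which is handy for testing.
    """
    root = Path(webui_root)
    version = tuple(python_version or sys.version_info[:2])
    if git_found is None:
        git_found = shutil.which("git") is not None

    problems = []
    # Assumption: any 3.10.x is acceptable, although 3.10.6 is recommended.
    if version[:2] != (3, 10):
        problems.append(f"Python 3.10 expected, found {version[0]}.{version[1]}")
    if not git_found:
        problems.append("git was not found on PATH")
    if not (root / "models" / "model.ckpt").is_file():
        problems.append("models/model.ckpt is missing")
    if not (root / "webui-user.bat").is_file():
        problems.append("webui-user.bat is missing; is this the repository root?")
    return problems


if __name__ == "__main__":
    # Assumes the script is run from the cloned repository directory.
    for problem in check_install("."):
        print("Problem:", problem)
```

The optional GFPGANv1.4.pth file is deliberately not checked, since the web UI runs without it.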

Automatic Installation on Linux

- Install the dependencies:

# Debian-based:

sudo apt install wget git python3 python3-venv

# Red Hat-based:

sudo dnf install wget git python3

# Arch-based:

sudo pacman -S wget git python3

- To install in /home/$(whoami)/stable-diffusion-webui/, run:

bash <(wget -qO- https://raw.githubusercontent.com/AUTOMATIC1111/stable-diffusion-webui/master/webui.sh)

Installation on Apple Silicon

Find the instructions here.

Contributing

Here's how to add code to this repo: Contributing

Documentation

The documentation was moved from this README over to the project's wiki.

Credits

- Stable Diffusion - https://github.com/CompVis/stable-diffusion, https://github.com/CompVis/taming-transformers

- k-diffusion - https://github.com/crowsonkb/k-diffusion.git

- GFPGAN - https://github.com/TencentARC/GFPGAN.git

- CodeFormer - https://github.com/sczhou/CodeFormer

- ESRGAN - https://github.com/xinntao/ESRGAN

- SwinIR - https://github.com/JingyunLiang/SwinIR

- Swin2SR - https://github.com/mv-lab/swin2sr

- LDSR - https://github.com/Hafiidz/latent-diffusion

- Ideas for optimizations - https://github.com/basujindal/stable-diffusion

- Doggettx - Cross Attention layer optimization - https://github.com/Doggettx/stable-diffusion, original idea for prompt editing.

- InvokeAI, lstein - Cross Attention layer optimization - https://github.com/invoke-ai/InvokeAI (originally http://github.com/lstein/stable-diffusion)

- Rinon Gal - Textual Inversion - https://github.com/rinongal/textual_inversion (we're not using his code, but we are using his ideas).

- Idea for SD upscale - https://github.com/jquesnelle/txt2imghd

- Noise generation for outpainting mk2 - https://github.com/parlance-zz/g-diffuser-bot

- CLIP interrogator idea and borrowing some code - https://github.com/pharmapsychotic/clip-interrogator

- Idea for Composable Diffusion - https://github.com/energy-based-model/Compositional-Visual-Generation-with-Composable-Diffusion-Models-PyTorch

- xformers - https://github.com/facebookresearch/xformers

- DeepDanbooru - interrogator for anime diffusers https://github.com/KichangKim/DeepDanbooru

- Initial Gradio script - posted on 4chan by an Anonymous user. Thank you Anonymous user.

- (You)